TikTok’s $300 billion-valued father or mother firm, ByteDance, is among the world’s busiest AI builders. It plans to spend billions of {dollars} on AI chips this yr, whereas its tech provides Sam Altman’s OpenAI a run for its cash.

ByteDance’s Duobao AI chatbot is presently the preferred AI assistant in China, with 78.6 million month-to-month energetic customers as of January.

This makes it the world’s second most-used AI app behind OpenAI’s ChatGPT (with 349.4 million MAUs). The not too long ago launched Doubao-1.5-pro is claimed to match the efficiency of OpenAI’s GPT-4o at a fraction of the associated fee.

As Counterpoint Analysis notes on this breakdown of Duobao’s positioning and performance, “very like its worldwide rival ChatGPT, the cornerstone of Doubao’s enchantment is its multimodality, providing superior textual content, picture, and audio processing capabilities”.

It might additionally generate music.

In September, ByteDance added an AI music technology operate to the Duobao app, which apparently “helps greater than ten forms of music kinds and lets you write lyrics and compose music with one click on”.

This, although, isn’t the tip of ByteDance’s fascination with constructing music AI applied sciences.

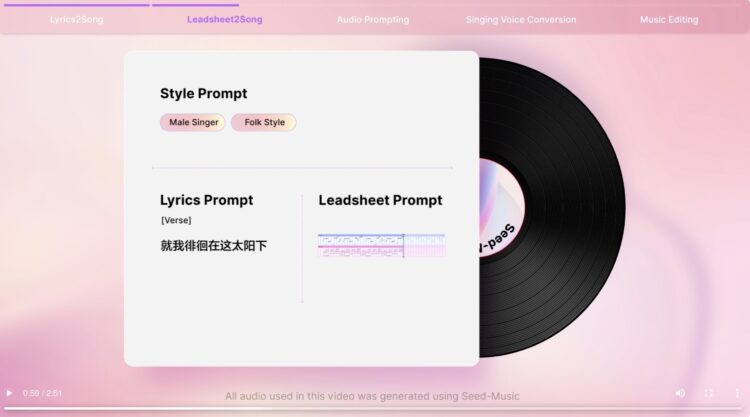

On September 18, ByteDance’s Duobao Staff introduced the massive launch of a set of AI music fashions dubbed Seed-Music.

Seed-Music, they claimed, would “empower folks to discover extra potentialities in music creation”.

Established in 2023, the ByteDance Doubao (Seed) Staff is “devoted to constructing industry-leading AI basis fashions”.

Based on the official launch announcement for Seed-Music in September, the AI music product “helps score-to-song conversion, controllable technology, music and lyrics modifying, and low-threshold voice cloning”.

It additionally claims that “it cleverly combines the strengths of language fashions and diffusion fashions and integrates them into the music composition workflow, making it appropriate for various music creation eventualities for each rookies and professionals”.

The official Seed-Music web site incorporates a variety of audio clips that display what it could do.

You’ll be able to hear a few of that, beneath:

Extra necessary, although, is how Seed-Music was constructed.

Fortunately, the Duobao Staff has printed a tech report that explains the inside workings of their Seed-Music challenge.

MBW has learn it cowl to cowl.

Within the introduction to ByteDance’s analysis paper, which you’ll be able to learn in full right here, the corporate’s researchers state that, “music is deeply embedded in human tradition” and that “all through human historical past, vocal music has accompanied key moments in life and society: from love calls to seasonal harvests”.

“Our aim is to leverage fashionable generative modeling applied sciences, to not exchange human creativity, however to decrease the obstacles to music creation.”

ByeDance analysis paper for Seed-Music

The intro continues: “Right now, vocal music stays central to world tradition. Nevertheless, creating vocal music is a posh, multi-stage course of involving pre-production, writing, recording, modifying, mixing, and mastering, making it difficult for most individuals.”

“Our aim is to leverage fashionable generative modeling applied sciences, to not exchange human creativity, however to decrease the obstacles to music creation. By providing interactive creation and modifying instruments, we goal to empower each novices and professionals to interact at totally different levels of the music manufacturing course of.”

How Seed-Music works

ByteDance’s researchers clarify that the “unified framework” behind Seed-Music “is constructed upon three basic representations: audio tokens, symbolic tokens, and vocoder latents”, which every correspond to “a technology pipeline.”

The audio token-based pipeline, as illustrated within the chart beneath, works like this: “(1) Enter embedders convert multi-modal controlling inputs, comparable to music model description, lyrics, reference audio, or music scores, right into a prefix embedding sequence. (2) The auto-regressive LM generates a sequence of audio tokens. (3) The diffusion transformer mannequin generates steady vocoder latents. (4) The acoustic vocoder produces high-quality 44.1kHz stereo audio.”

In distinction to the audio token-based pipeline, the symbolic token-based Generator, which you’ll be able to see within the chart beneath, is “designed to foretell symbolic tokens for higher interpretability”, which the researchers state is “essential for addressing musicians’ workflows in Seed-Music”.

Based on the analysis paper, “Symbolic representations, comparable to MIDI, ABC notation and MusicXML, are discrete and could be simply tokenized right into a format suitable with LMs”.

ByteDance’s researchers add within the paper: “Not like audio tokens, symbolic representations are interpretable, permitting creators to learn and modify them instantly. Nevertheless, their lack of acoustic particulars means the system has to rely closely on the Renderer’s means to generate nuanced acoustic traits for musical efficiency. Coaching such a Renderer requires large-scale datasets of paired audio and symbolic transcriptions, that are particularly scarce for vocal music.”

The plain query…

By now, you’re in all probability asking the place The Beatles and Michael Jackson’s music come into all of this.

We’re practically there. First, we have to discuss MIRs.

Based on the Seed-Music analysis paper, “to extract the symbolic options from audio for coaching the above system,” the group behind the tech used varied “in-house Music Info Retrieval (MIR) fashions”.

Based on this very clear rationalization over at Dataloop, MIR “is a subcategory of AI fashions that focuses on extracting significant data from music information, comparable to audio alerts, lyrics, and metadata”.

Aka: It’s a metadata scraper. Stick a track into the jaws of a MIR mannequin, and it’ll analyze, predict and current information that may embody pitch, beats-per-minute (BPM), lyrics, chords, and extra.

Music Info Retrieval analysis first gained reputation over its means to assist with the digital classification of genres, moods, tempos, and so on. – key constructing blocks for suggestion methods utilized by music streaming companies.

Now, although, main generative AI music platforms are reportedly utilizing MIR analysis to enhance their product output.

Are you able to see the place that is going? Sure, in fact.

ByteDance’s analysis group has efficiently constructed its personal in-house MIR fashions, which have been utilized by the ByteDance group to “extract the symbolic options from audio” to construct components of its Seed-Music system. These MIR fashions embody:

AI, are you okay? Are you okay, AI?

Taking a deeper dive into the analysis printed by ByteDance for its Structural evaluation-focused MIR mannequin, we discover a analysis paper titled:

‘To catch a refrain, verse, intro, or anything: Analyzing a track with structural capabilities’.

It was printed in 2022. You can learn it right here.

Based on the paper: “Standard music construction evaluation algorithms goal to divide a track into segments and to group them with summary labels (e.g., ‘A’, ‘B’, and ‘C’).

“Nevertheless, explicitly figuring out the operate of every phase (e.g., ‘verse’ or ‘refrain’) is never tried, however has many functions”.

On this analysis paper, they “introduce a multi-task deep studying framework to mannequin these structural semantic labels instantly from audio by estimating ‘verseness,’ ‘chorusness,’ and so forth, as a operate of time”.

To conduct this analysis, the ByteDance group used 4 “public datasets”, together with one known as the ‘Isophonics’ dataset, which, it notes, “incorporates 277 songs from The Beatles, Carole King, Michael Jackson, and Queen.”

The supply of the Isophonics dataset utilized by ByteDance’s researchers seems to be Isophonics.web, described as the house for software program and information assets from the Centre for Digital Music (C4DM) at Queen Mary, College of London.

The Isophonics web site notes that its “chord, onset, and segmentation annotations have been utilized by many researchers within the MIR neighborhood.”

The web site explains that “the annotations printed right here fall into 4 classes: chords, keys, structural segmentations, and beats/bars”.

In 2022, ByteDance’s researchers printed a video presentation of their, To catch a refrain, verse, intro, or anything: Analyzing a track with structural capabilities paper for the Worldwide Convention on Acoustics, Speech, and Sign Processing (ICASSP).

You’ll be able to see this presentation beneath.

The video’s caption describes a “novel system/technique that segments a track into sections comparable to refrain, verse, intro, outro, bridge, and so on”.

It demonstrates its findings associated to songs by the Beatles, Michael Jackson, Avril Lavigne and different artists:

We should be cautious right here over any suggestion that ByteDance’s AI music-generating expertise could have been “educated” utilizing songs by standard artists just like the Beatles or Michael Jackson.

But, as you possibly can see, a dataset containing annotations of such songs has clearly been used as part of a ByteDance analysis challenge on this discipline.

Any evaluation or reference to standard songs and their annotations in analysis carried out or funded by a multi-billion-dollar expertise firm will certainly increase a variety of questions for the music {industry} – particularly these employed to guard its copyrights.

“We firmly imagine that AI applied sciences ought to assist, not disrupt, the livelihoods of musicians and artists. AI ought to function a software for creative expression, as true artwork all the time stems from human intention.”

ByteDance’s Seed-Music researchers

There’s a part devoted to Ethics and Security on the backside of ByteDance’s Seed-Music analysis paper.

Based on ByteDance’s researchers, they “firmly imagine that AI applied sciences ought to assist, not disrupt, the livelihoods of musicians and artists“.

They add: “AI ought to function a software for creative expression, as true artwork all the time stems from human intention. Our aim is to current this expertise as a possibility to advance the music {industry} by decreasing obstacles to entry, providing smarter, quicker modifying instruments, producing new and thrilling sounds, and opening up new potentialities for creative exploration.”

The ByteDance researchers additionally define moral points particularly: “We acknowledge that AI instruments are inherently vulnerable to bias, and our aim is to supply a software that stays impartial and advantages everybody. To realize this, we goal to supply a variety of management components that assist decrease preexisting biases.

“By returning creative selections to customers, we imagine we will promote equality, protect creativity, and improve the worth of their work. With these priorities in thoughts, we hope our breakthroughs in lead sheet tokens spotlight our dedication to empowering musicians and fostering human creativity by AI.”

When it comes to Security / ‘deepfake’ issues, the researchers clarify that, “within the case of vocal music, we acknowledge how the singing voice evokes one of many strongest expressions of particular person id”.

They add: “To safeguard in opposition to the misuse of this expertise in impersonating others, we undertake a course of much like the security measures specified by Seed-TTS. This entails a multistep verification technique for spoken content material and voice to make sure the enrollment of audio tokens incorporates solely the voice of licensed customers.

“We additionally implement a multi-level water-marking scheme and duplication checks throughout the generative course of. Trendy methods for music technology could basically reshape tradition and the connection between creative creation and consumption.

“We’re assured that, with robust consensus between stakeholders, these applied sciences will and revolutionize music creation workflow and profit music novices, professionals, and listeners alike.”Music Enterprise Worldwide